I’m surprised to be posting again so soon, but the evolution of a project I worked on from 2017 to 2019 has finally come to light.

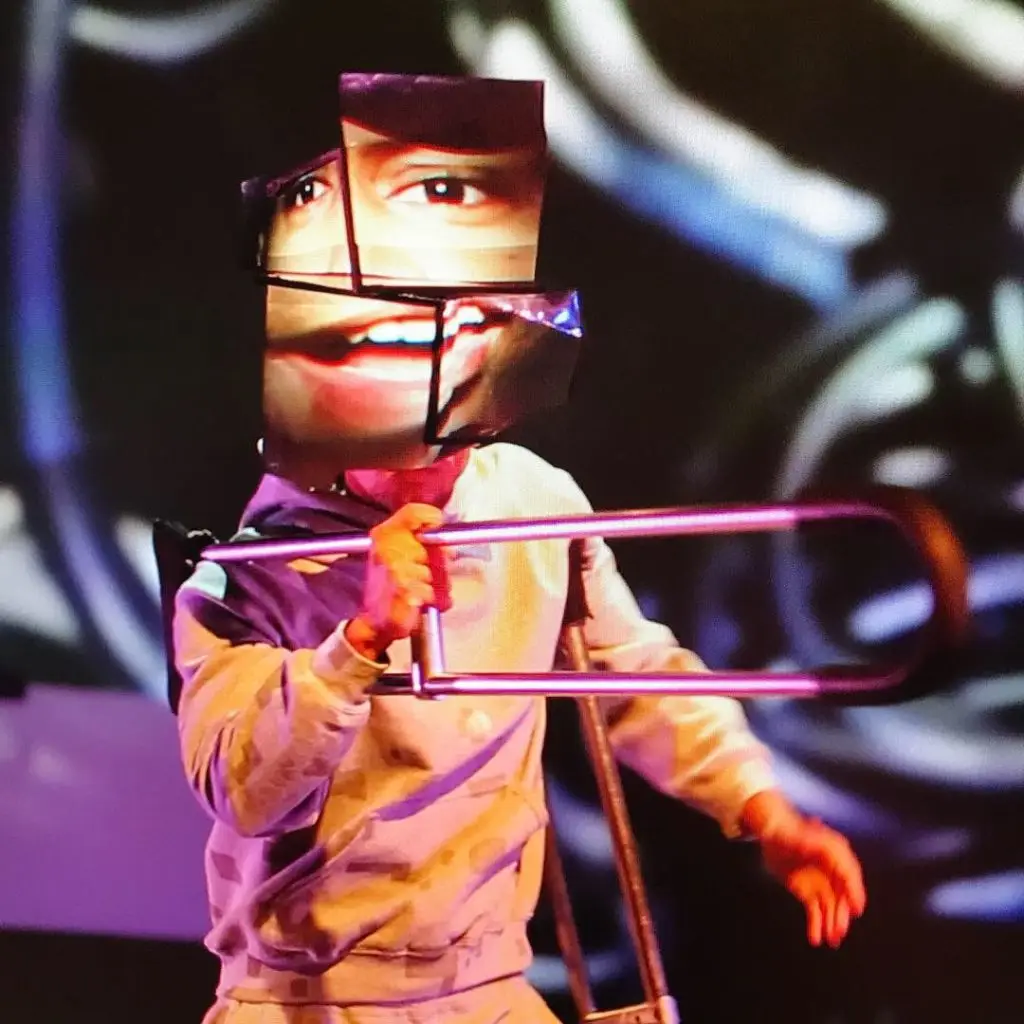

In 2018 I worked with Bill Shannon and David Whitewolf to bring a new version of the projection mapping mask to life for Sterling Fox // Baby Fuzz. It was an evolution of the previous mask: it could adjust to multiple positions for singing and performance, and it used a brighter 200-lumen laser projector instead of a 75-lumen one.

Bill Shannon collaborated with Sterling and David to bring the design to life, and I focused on the computing side, which uses a Raspberry Pi for playback. The projector and Pi both run off rechargeable batteries built into the mask, and the entire thing is lightweight, self-contained, and triggered with OSC over WiFi. I made a custom TouchOSC template (similar to the one I made for the PocketVJ) that triggers videos with a tap on a cell phone.
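For readers unfamiliar with OSC: a trigger like this is just a small binary message, usually sent as a UDP datagram. The sketch below builds an OSC 1.0 message by hand and sends it, which is roughly what happens when a TouchOSC button is tapped. It is not the template or software used on the mask; the address `/mask/play`, the IP address, and port 8000 are hypothetical placeholders.

```python
import socket
import struct


def osc_string(s: str) -> bytes:
    """Encode an OSC-string: ASCII bytes, a NUL terminator, padded to a multiple of 4."""
    data = s.encode("ascii") + b"\x00"
    padding = (4 - len(data) % 4) % 4
    return data + b"\x00" * padding


def osc_message(address: str, *args) -> bytes:
    """Build an OSC 1.0 message with int32, float32, or string arguments."""
    type_tags = ","
    payload = b""
    for arg in args:
        if isinstance(arg, int):
            type_tags += "i"
            payload += struct.pack(">i", arg)  # big-endian 32-bit int
        elif isinstance(arg, float):
            type_tags += "f"
            payload += struct.pack(">f", arg)  # big-endian 32-bit float
        elif isinstance(arg, str):
            type_tags += "s"
            payload += osc_string(arg)
        else:
            raise TypeError(f"unsupported OSC argument type: {type(arg).__name__}")
    return osc_string(address) + osc_string(type_tags) + payload


def send_cue(host: str, port: int, address: str, *args) -> None:
    """Send one OSC message as a single UDP datagram (no delivery guarantee)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(osc_message(address, *args), (host, port))
```

Usage, with hypothetical values: `send_cue("192.168.1.50", 8000, "/mask/play", 2)` asks the mask listening at that address to play clip 2. UDP keeps latency low, but a datagram lost on a busy network is simply gone, so on congested WiFi a cue can be missed.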

Laser pico projectors were key to the DIY design of all three masks because of their “infinity focus” effect (note: not all laser projectors work this way). In the image below, you can see that there is a “correction lens”, but not the typical lens you would find in a traditional projector.

Thanks to Phil Reyneri for this image.

The lack of a conventional lens in the laser projector allowed us to put a wide-angle lens of our own on the front of the pico projector. To achieve full face coverage in the first generation of the mask (V1), we had to use two pico projectors and bounce the light off optical mirrors at the back of the mask.

In Mask V1, two projectors required two sources of media. Enter iPod touches 🙂. They were not the most affordable option, but they worked off the shelf with an App Store app called “Projection Bomber”, which no longer exists.

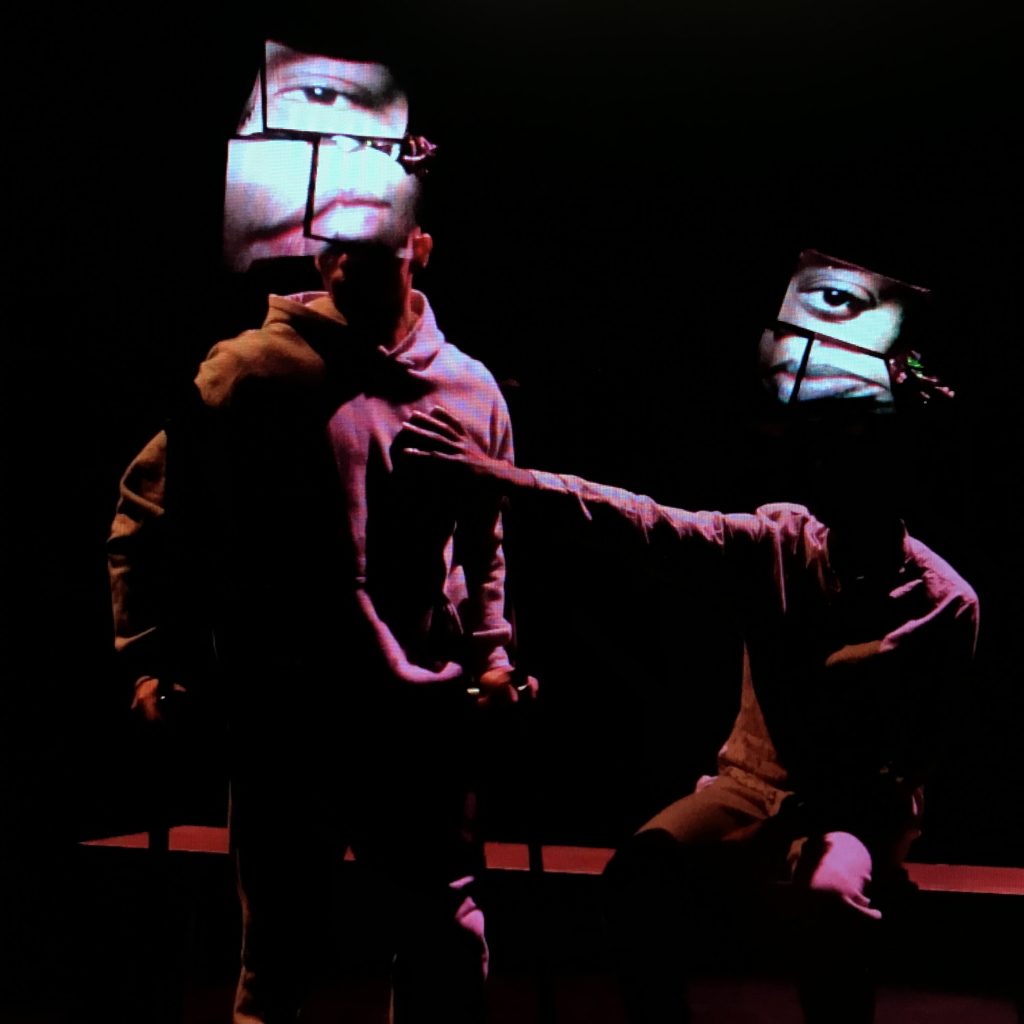

Mask V2 needed a fresh approach. The goal was to have multiple masks trigger and sync during live performances.

Thanks to the support of The Studio for Creative Inquiry at CMU, we were given a space and resources to work on the second version of the mask.

I reached out to MicroVision and got an evaluation sample to prototype with.

To drive the media for each mask, I started with a Raspberry Pi Zero W (the W adds WiFi). Getting quality audio out of the mask required an Adafruit speaker bonnet. From there, all we needed was to sync over WiFi and trigger with OSC. 🙁 (sad face). It turned out that the Zero worked great as a standalone headset but ran into audio/video issues when we attempted to sync.

I reached for a tool I’ve reviewed before: the PocketVJ (PVJ) by Marc-André Gasser. It proved that WiFi sync and OSC triggering on the Raspberry Pi 3 would work for our needs.

From there, I did a custom build of the PVJ, using QLab to send OSC triggers over WiFi. (Note: QLab is the preferred standard in the spaces we were working with. Please see this article if you are curious about using QLab for OSC.)
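On the receiving end, each Pi has to listen for these messages and map each address to a clip. The PocketVJ ships its own software for this, so the sketch below is not its code. It is a minimal, hypothetical receiver that parses a single OSC message (bundles are not handled) and looks up a clip path in a made-up cue table; the addresses, file paths, and port are assumptions, and launching the actual video player is left as a placeholder.

```python
from __future__ import annotations

import socket
import struct

# Hypothetical cue table: OSC address -> video file on the Pi.
CUES = {
    "/cue/1": "/home/pi/videos/intro.mp4",
    "/cue/2": "/home/pi/videos/verse.mp4",
}


def read_osc_string(data: bytes, offset: int) -> tuple[str, int]:
    """Read a NUL-terminated, 4-byte-padded OSC-string; return it and the next offset."""
    end = data.index(b"\x00", offset)
    text = data[offset:end].decode("ascii")
    # Skip the terminator plus padding up to the next multiple of 4.
    return text, (end + 4) & ~3


def parse_osc_message(data: bytes) -> tuple[str, list]:
    """Parse one OSC 1.0 message with int32, float32, or string arguments."""
    address, offset = read_osc_string(data, 0)
    tags, offset = read_osc_string(data, offset)
    if not tags.startswith(","):
        raise ValueError("missing OSC type tag string")
    args: list = []
    for tag in tags[1:]:
        if tag == "i":
            args.append(struct.unpack_from(">i", data, offset)[0])
            offset += 4
        elif tag == "f":
            args.append(struct.unpack_from(">f", data, offset)[0])
            offset += 4
        elif tag == "s":
            value, offset = read_osc_string(data, offset)
            args.append(value)
        else:
            raise ValueError(f"unsupported OSC type tag: {tag!r}")
    return address, args


def cue_for_packet(data: bytes) -> str | None:
    """Return the clip to play for a packet, or None if there is nothing to do."""
    address, args = parse_osc_message(data)
    # Treat a zero first argument (e.g. a button release) as "no action".
    if args and args[0] == 0:
        return None
    return CUES.get(address)


def serve(port: int = 8000) -> None:
    """Listen for OSC cues over UDP forever (hypothetical port)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.bind(("0.0.0.0", port))
        while True:
            data, _ = sock.recvfrom(1024)
            path = cue_for_packet(data)
            if path:
                print("play", path)  # placeholder: hand off to the real video player
```

Keeping the cue table as plain data means the same receiver script could run on every mask, with only the table or the port differing per performer.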

Ultimately, after a run-in with an overcongested 2.4 GHz WiFi band in Philadelphia, we synced the final show playback over Ethernet and unplugged the performers just before they took the stage.

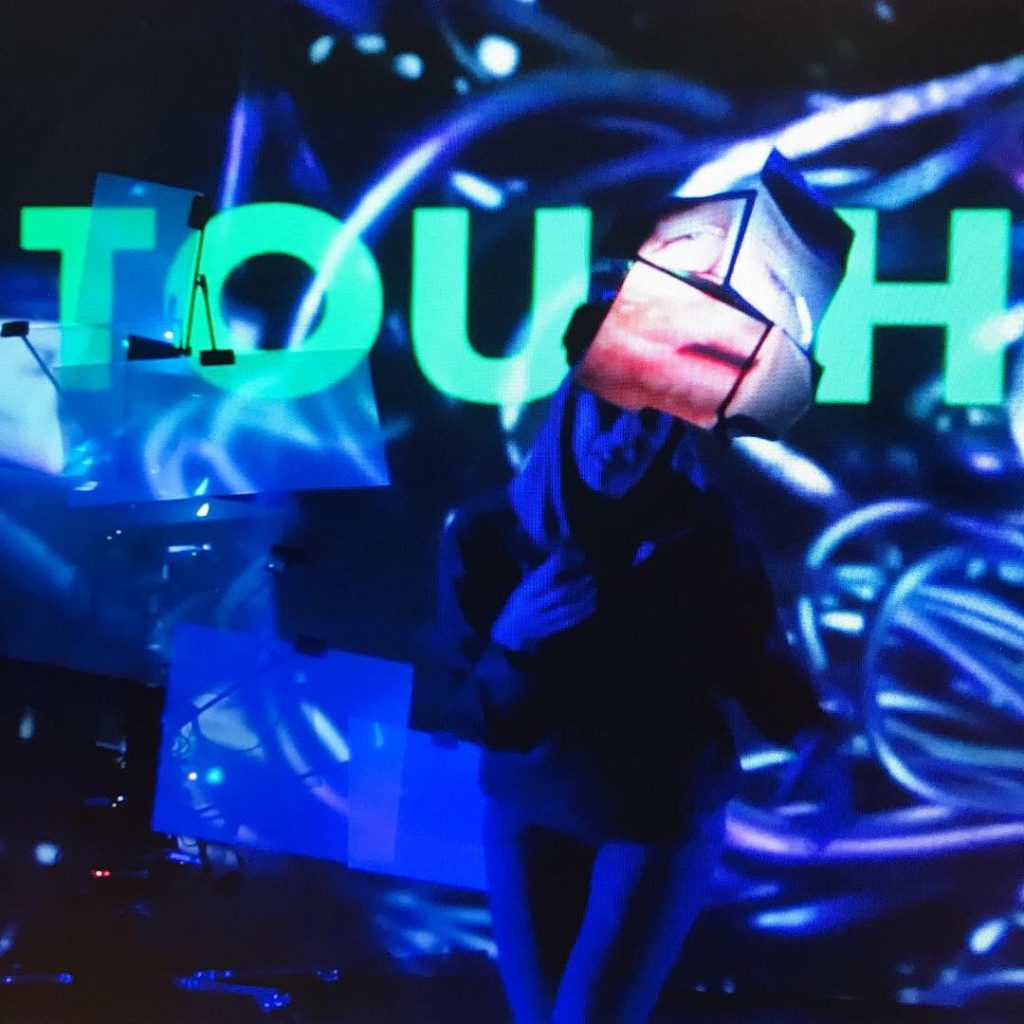

In 2019, a looped version of the V2 masks was on display in the Miller ICA Gallery.

Mask V3 used a similar build, but it was more durable, multi-positional, and brighter, and it was controlled by a phone or tablet.

In 2020, with a pandemic lingering all around us, it’s a great feeling to see such a cool project debut in such a beautiful music video (thanks to Nesto and their team for that).

Thanks to Baby Fuzz and the music video team for making it happen.

References: Article I wrote for PMC about Mask V1